AI Song Maker Reshaping How Ideas Become Finished Songs

When people first approach music creation, the barrier is rarely inspiration. It is execution. Turning a rough idea into a structured, listenable track requires tools, time, and technical fluency that most creators do not naturally have. This gap is exactly where tools like AI Song Maker begin to matter. They do not replace creativity. They remove friction between imagination and output.

In my observation, what makes this shift significant is not just speed. It is the way control is redistributed. Instead of thinking in terms of chords, mixing chains, or DAWs, users begin with intent: mood, tone, structure, narrative. The system translates those into musical form. That shift, subtle as it sounds, changes who can participate in music creation.

Rather than forcing users into a traditional production pipeline, the platform reorganizes the process around inputs that feel more natural. Lyrics, descriptions, and stylistic direction become the starting point. The result is not just a tool, but a different mental model for creating music.

Why Text Driven Music Creation Changes Creative Entry Barriers

Traditional music workflows are layered and sequential. Composition leads to arrangement, which leads to recording, then mixing, then mastering. Each step requires specialized knowledge.

AISong’s approach compresses this structure into a single interaction layer. The user provides intent, and the system handles translation.

From Technical Steps To Intent Driven Inputs

Instead of asking how to produce a sound, the platform asks:

- What mood should the song carry?

- What genre or style should it resemble?

- What kind of vocal presence is expected?

- Should the output be instrumental or include vocals?

This aligns more closely with how non-professional creators think. In my testing, this reduces hesitation. Users are less concerned about “doing it right” and more focused on describing what they want.

How Multiple Models Expand Creative Variation

The system supports multiple underlying model versions. This is not always visible at the surface level, but it becomes clear when comparing outputs.

Different models tend to interpret prompts slightly differently:

- Some emphasize structure and clarity

- Others prioritize texture and atmosphere

- Certain versions handle vocals more consistently

This layered model approach means the same input can produce different musical interpretations, which is useful when exploring creative directions rather than optimizing for a single result.

How AISong Generates Full Tracks From Minimal Inputs

The core mechanism is relatively straightforward, but the implications are broader.

Understanding The Role Of Prompt And Lyrics Input

AI Song Generator typically accepts two main types of input:

- Short descriptive prompts

- Full lyrical content

When only a prompt is provided, the system constructs:

- Song structure

- Melody progression

- Instrumental layering

When lyrics are included, the system additionally aligns:

- Vocal phrasing

- Rhythm matching

- Emotional emphasis across sections

In practice, lyrics-driven generation tends to feel more intentional, while prompt-driven generation is more exploratory.

How Structure And Arrangement Are Inferred Automatically

One of the more interesting aspects is how the platform builds song structure without explicit instruction.

In most outputs I tested, the system implicitly creates:

- Intro segments

- Verse and chorus transitions

- Repetition patterns

This suggests that the model is not just generating sound, but mapping learned musical conventions into each output.

Why Output Feels Production Ready In Many Cases

The platform positions outputs as close to finished tracks. While this varies depending on input quality, many results include:

- Balanced instrumental layers

- Consistent vocal presence

- Defined section transitions

It does not fully replace professional mixing, but it reduces the gap significantly compared to raw generative audio.

Step By Step Workflow Based On Actual Platform Flow

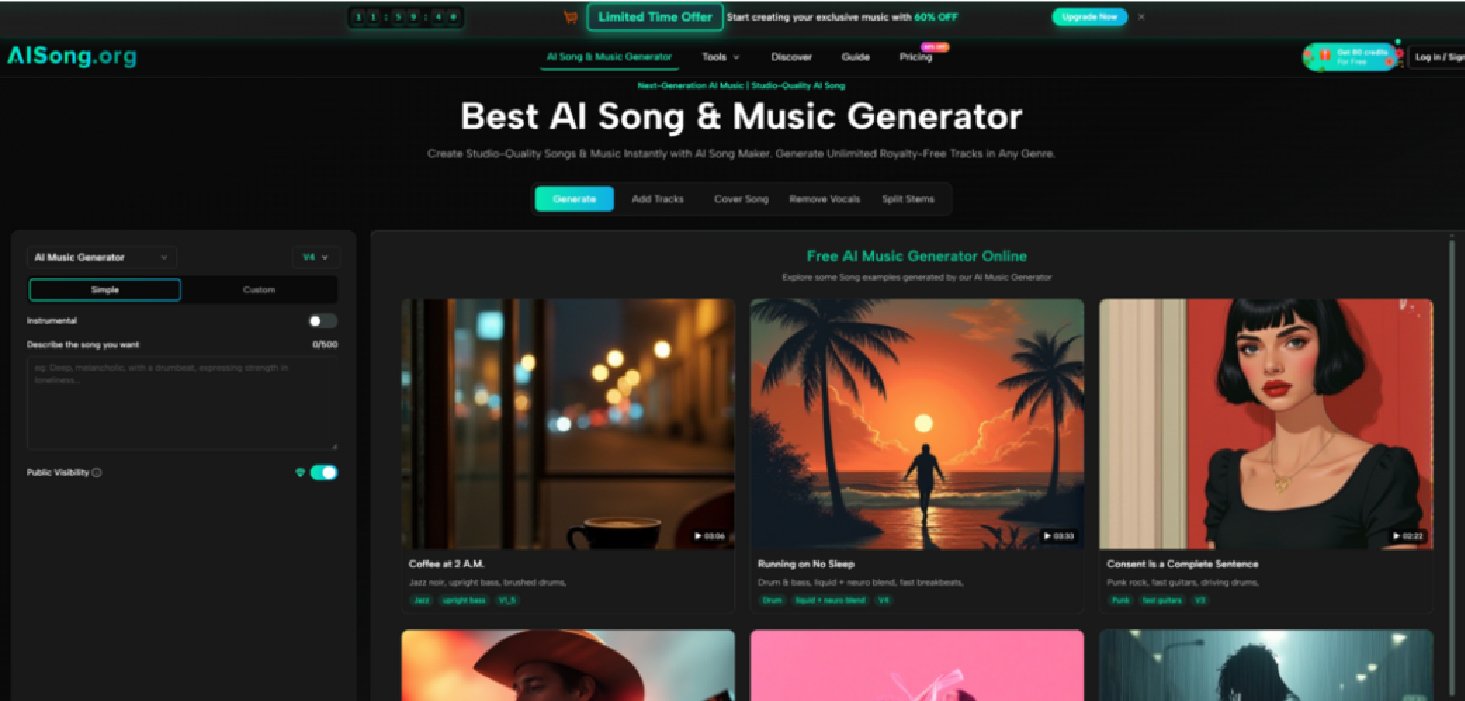

Step 1 Enter Generator And Choose Creation Mode

Users begin by selecting between simplified generation and more controlled creation modes. The simplified mode focuses on speed, while the advanced mode allows more detailed inputs such as lyrics and style parameters.

Step 2 Provide Prompt Or Full Lyrics With Style Settings

The next step involves entering:

- Song title or concept

- Style or genre description

- Optional lyrical content

- Additional attributes like tempo or mood

This stage defines how the system interprets the request.
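To make the shape of these inputs concrete, here is a minimal sketch in Python. The `SongRequest` class, its field names, and the `to_payload` helper are hypothetical, chosen only to mirror the list above; they are not the platform's actual schema or API.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class SongRequest:
    """Hypothetical container for the Step 2 inputs (not the platform's real schema)."""
    title: str                     # song title or concept
    style: str                     # style or genre description
    lyrics: Optional[str] = None   # leave unset for prompt-driven generation
    tempo: Optional[str] = None    # free-text attribute, e.g. "slow"
    mood: Optional[str] = None     # free-text attribute, e.g. "nostalgic"

    def mode(self) -> str:
        # Non-empty lyrics mean lyrics-driven generation; otherwise prompt-driven.
        return "lyrics-driven" if self.lyrics and self.lyrics.strip() else "prompt-driven"

    def to_payload(self) -> dict:
        # Drop unset optional fields so only the stated intent is passed along.
        return {key: value for key, value in asdict(self).items() if value is not None}


request = SongRequest(title="Night Drive", style="synthwave", mood="nostalgic")
print(request.mode())
print(request.to_payload())
```

Keeping the optional fields unset rather than filling them with defaults reflects the idea that the system, not the user, decides anything the request leaves open.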

Step 3 Select Model And Generate Track Using Credits

The platform operates on a credit system. Once parameters are set, users trigger generation, and the system processes the request through one of its available models.
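Because each generation consumes credits, it can help to plan how many attempts a budget allows before comparing models. The sketch below is illustrative only: the function name `plan_attempts`, the model labels, and the credit cost are all made-up assumptions, since the article does not state the platform's actual pricing or model names.

```python
from itertools import cycle, islice
from typing import List


def plan_attempts(credits: int, cost_per_track: int, models: List[str]) -> List[str]:
    """Return which model to use for each affordable attempt, in round-robin order."""
    if cost_per_track <= 0:
        raise ValueError("cost_per_track must be positive")
    if not models:
        return []
    attempts = credits // cost_per_track  # whole generations the budget covers
    return list(islice(cycle(models), attempts))


# Hypothetical numbers: 50 credits, 10 credits per track, two placeholder models.
plan = plan_attempts(credits=50, cost_per_track=10, models=["model-a", "model-b"])
print(plan)
```

Rotating through models with the same prompt is one simple way to spend a fixed budget on comparing interpretations rather than repeating a single model.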

Step 4 Refine Output Through Editing And Expansion Tools

After generation, users can:

- Extend the track duration

- Separate vocals and instrumentals

- Add missing elements such as backing tracks

This stage turns a single output into an editable asset rather than a static result.

Comparing AISong Workflow With Traditional Music Production Paths

| Aspect | AISong Approach | Traditional Workflow |
| --- | --- | --- |
| Entry Requirement | Minimal, description-based | High, technical knowledge required |
| Time To First Output | Minutes | Hours to days |
| Control Method | Prompt and parameter driven | Manual composition and editing |
| Iteration Speed | Rapid, low cost per attempt | Slower, resource intensive |
| Output Readiness | Semi-finished to near complete | Depends on full production chain |

This comparison highlights not superiority, but a shift in where effort is placed. AISong reduces production complexity while increasing reliance on input clarity.

Where This Model Fits In Real Creative Scenarios

Content Creators Requiring Fast Background Music

Short-form video creators often need multiple tracks quickly. In these cases, speed matters more than perfect customization.

AISong allows rapid iteration, making it suitable for generating multiple usable variations without deep production work.

Writers And Storytellers Adding Audio Layers

Writers experimenting with audio storytelling can convert narrative ideas into musical forms without switching tools.

Lyrics-based generation becomes especially relevant here, as it aligns directly with written content.

Musicians Prototyping Ideas Before Full Production

For musicians, the platform can act as a sketchpad. Instead of building a track from scratch, they can generate a base and refine it externally.

This does not replace traditional tools, but it accelerates early-stage exploration.

Limitations That Affect Output Consistency And Control

Prompt Quality Strongly Influences Final Results

Inconsistent prompts lead to inconsistent outputs. Vague descriptions often produce generic tracks.

Clear direction improves results significantly, which means users still need to develop prompt clarity over time.

Multiple Generations May Be Required For Precision

While generation is fast, achieving a specific vision may require several attempts.

This trade-off shifts effort from production time to iteration cycles.

Fine Grained Control Remains Limited Compared To DAWs

Compared to professional tools, detailed adjustments such as exact timing, mixing levels, or instrument placement are still constrained.

For highly specific production needs, external tools remain necessary.

Why This Shift Matters Beyond Individual Tools

The broader implication is not about one platform. It is about how creative systems are evolving.

Music creation is gradually moving from skill-constrained execution to intent-driven generation.

This does not eliminate expertise. It changes where expertise is applied.

Understanding how to describe, guide, and refine becomes as important as knowing how to produce.

In that sense, AISong is less about automation and more about translation. It translates human intent into structured sound. And for many creators, that translation is the missing step that makes music creation accessible in the first place.